To make your life easier, we’ve collected an (opinionated) list of some open datasets that you can’t afford not to know about in the AI world." This week, a few machine learning experts and I were talking about all this.

"Standard datasets can be used as validation or a good starting point for building a more tailored solution. "Most people in AI forget that the hardest part of building a new AI solution or product is not the AI or algorithms - it’s the data collection and labeling," Oliveira writes on StartUp Grind. The post, Fueling the Gold Rush: The Greatest Public Datasets for AI, includes links to the data with a legend and other context to help you quickly decide whether to download and analyze. For that reason, I draw your attention to an incredible stash of 30 data sets posted on StartUp Grind's Medium blog by Luke de Oliveira, a tech entrepreneur and visiting scientist at Berkeley Labs. There's a lot of interest in curated data sets that are already cleaned, labeled and ready to be mined. If you'd like to dig into open data on autophagy, check out What We Learned from Big Data for Autophagy Research on the National Institutes of Health, US National Library of Medicine.

Look up YouTube videos by Canadian nephrologist Jason Fung to learn more about it. During the coronavirus pandemic, I discovered the many health benefits of intermittent fasting, including a fascinating "cell recycling" system it triggers in us called autophagy. Below are sources (alphabetized) that my predecessor Jennifer Scott pulled together and I add to occasionally.

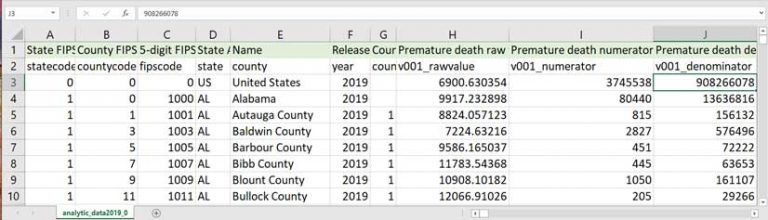

In general, those are either more complicated or less useful than the most common two, although certainly they are also acceptable solutions and not uncommon to see in practice.( Editor's note: Data sources were last confirmed and updated by * denotes most recently added data set.)īecause so many in academia need data for school, I keep an eye out for open sources. Some other methods include call execute, run_macro, or using file open commands to work with the file directly. There are other methods, but these two are the most common and should work for most use cases. Then %include those files instead of using the macro variable in the input datastep - yes, you can %include in the middle of a datastep. Take your data step, and instead of accumulating and then using call symput, write each line out to a temp file (or two). Second, you can surpass the 64k limit by writing to a file instead of to a macro variable. This has a larger maximum length limitation than the data step method (the data step method maximum is $32767 if you don't use multiple variables, SQL's is double that at 64kib). There are some ways you can improve this, though, that may take some of your worries away.įirst off, a common solution is to build this at the row level (as you do) and then use proc sql to create the macro variable. Yes, you could check things a bit more carefully I would allocate $32767 for the two statements, for example, just to be cautious. What you're doing is a fairly standard method of doing this. One could run into trouble with this approach if insufficient space is allocated to the variables storing the length and input statements, or if the statement lengths exceed the maximum macro variable length. This gets me what I need, but there has to be a cleaner way. 
* Construct LENGTH and INPUT statements */Ĭall catx(' ', lenstmt, colname, '$', collen) Ĭall catx(' ', inptstmt, colstart), colname, '$ &') There has to be a better way than my naive approach, which is to construct a length statement and an input statement using the imported metadata, like so: /* Import metadata */ I want every column to be character with the length specified in the metadata file. My goal is to have a SAS dataset that looks like this: Name | Age | Comments The names are listed in the order in which they appear in the data file. The file containing the metadata is tab-delimited with two columns: one with the name of the column in the data file and one with the character length of that column. That is, each column of data is aligned to a particular column of the text file, padded with spaces to ensure alignment. The file containing the data is a fixed-width text file. I'd like to use these two files to construct a single SAS dataset containing the data from one file with the column names and lengths from the other. I have two text files, one containing raw data with no headers and another containing the associated column names and lengths.
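A minimal sketch of the first suggestion, building one fragment per metadata row and letting PROC SQL collapse the fragments into macro variables. The file names `meta.txt` and `data.txt`, the variable names `colname`, `collen`, `colnum`, `lenpart` and `inpart`, and the assumption that the metadata file has no header row are all hypothetical. The sketch also swaps the question's `$ &` list-input modifier for formatted input (`$w.` read at an `@` column pointer), which reads exactly w columns per field; that swap is a choice made here, not something the original answer prescribes.

```sas
/* Hypothetical file names: point these at your own files */
filename meta "meta.txt";   /* tab-delimited: column name, character length */
filename raw  "data.txt";   /* fixed-width data, no header row */

/* Read the metadata, one row per column, in file order */
data work.meta;
    infile meta dlm='09'x dsd truncover;
    length colname $32;
    input colname $ collen;
run;

/* Build one LENGTH fragment and one INPUT fragment per column */
data work.meta_stmts;
    set work.meta;
    length lenpart inpart $100;
    retain colstart 1;                       /* starting column of the current field */
    colnum  = _n_;                           /* preserves metadata order for PROC SQL */
    lenpart = catx(' ', colname, '$', collen);                                 /* e.g. Name $ 10  */
    inpart  = catx(' ', cats('@', colstart), colname, cats('$', collen, '.')); /* e.g. @1 Name $10. */
    colstart = colstart + collen;            /* next field starts right after this one */
run;

/* Collapse the fragments into macro variables (limit: 65,534 characters each) */
proc sql noprint;
    select lenpart, inpart
        into :lenstmt separated by ' ',
             :inptstmt separated by ' '
        from work.meta_stmts
        order by colnum;
quit;

/* Read the fixed-width file with the generated statements */
data work.want;
    length &lenstmt;
    infile raw truncover;
    input &inptstmt;
run;
```

If leading blanks inside a field matter, `$CHARw.` preserves them where `$w.` strips them; building the informat with `cats('$char', collen, '.')` is the only change needed.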
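The second suggestion, writing the statements to temporary files and pulling them in with %include, can be sketched under the same hypothetical file and variable names; the filerefs `lenf` and `inpf` are illustrative too. Because each column's fragment is written on its own line, no single character variable or macro variable ever has to hold a whole statement, so neither the $32767 variable limit nor the macro variable limit applies.

```sas
filename meta "meta.txt";   /* hypothetical: tab-delimited name/length metadata */
filename raw  "data.txt";   /* hypothetical: fixed-width data file */
filename lenf temp;         /* temporary file that will hold the LENGTH statement */
filename inpf temp;         /* temporary file that will hold the INPUT statement */

/* Read the metadata, one row per column, in file order */
data work.meta;
    infile meta dlm='09'x dsd truncover;
    length colname $32;
    input colname $ collen;
run;

/* Write each statement out one column per line instead of into a macro variable */
data _null_;
    set work.meta end=last;
    length line $100;
    retain colstart 1;                       /* starting column of the current field */

    file lenf;                               /* subsequent PUTs go to the LENGTH file */
    if _n_ = 1 then put 'length';
    line = catx(' ', colname, '$', collen);
    put line;
    if last then put ';';

    file inpf;                               /* switch output to the INPUT file */
    if _n_ = 1 then put 'input';
    line = catx(' ', cats('@', colstart), colname, cats('$', collen, '.'));
    put line;
    if last then put ';';

    colstart = colstart + collen;            /* next field starts right after this one */
run;

/* %include works mid-step as long as it starts at a statement boundary */
data work.want;
    %include lenf;
    infile raw truncover;
    %include inpf;
run;
```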
